~www_lesswrong_com | Bookmarks (713)

-

New AI safety treaty paper out! — LessWrong

Published on March 26, 2025 9:29 AM GMT. Last year, we (the Existential Risk Observatory) published a Time...

-

Map of all 40 copyright suits v. AI in U.S. — LessWrong

Published on March 26, 2025 7:57 AM GMT. Download the latest PDF with links to court dockets...

-

AI "Deep Research" Tools Reviewed — LessWrong

Published on March 24, 2025 6:40 PM GMT. Midjourney: “an artificially intelligent researcher, library, posthuman archivist, mapping...

-

Notes on countermeasures for exploration hacking (aka sandbagging) — LessWrong

Published on March 24, 2025 6:39 PM GMT. If we naively apply RL to a scheming AI,...

-

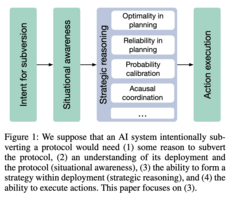

Subversion Strategy Eval: Can language models statelessly strategize to subvert control protocols? — LessWrong

Published on March 24, 2025 5:55 PM GMT. We recently released Subversion Strategy Eval: Can language models statelessly...

-

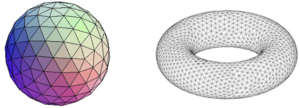

From Loops to Klein Bottles: Uncovering Hidden Topology in High Dimensional Data — LessWrong

Published on March 24, 2025 5:09 PM GMT. Motivation: Dimensionality reduction is vital to the analysis of high...

-

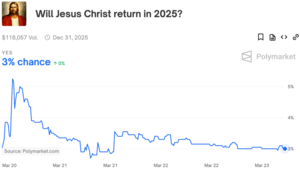

Will Jesus Christ return in an election year? — LessWrong

Published on March 24, 2025 4:50 PM GMT. Thanks to Jesse Richardson for discussion. Polymarket asks: will Jesus...

-

Sentinel's Global Risks Weekly Roundup #12/2025: Famine in Gaza, H7N9 outbreak, US geopolitical leadership weakening. — LessWrong

Published on March 24, 2025 4:46 PM GMT. Executive summary: Forecasters believe there’s an 18% chance (range: 4%-50%)...

-

Delicious Boy Slop - Boring Diet, Effortless Weightloss — LessWrong

Published on March 24, 2025 3:01 PM GMT. Your beloved 34 year old author is never hungry. I...

-

More on Various AI Action Plans — LessWrong

Published on March 24, 2025 1:10 PM GMT. Last week I covered Anthropic’s relatively strong submission, and...

-

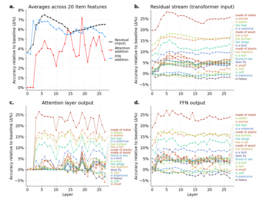

Emergent scaling effects on the functional hierarchies within LLMs — LessWrong

Published on March 24, 2025 1:03 PM GMT. I have been poking around with LLMs, and I...

-

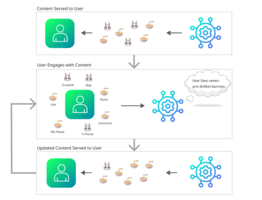

Recommender Alignment for Lock-In Risk — LessWrong

Published on March 24, 2025 12:56 PM GMT. Epistemic status: my own research and reasoning about lock-in...

-

What's the word for the amount of expertise that I, an experienced therapy patient and generally educated person, have on psychology topics? — LessWrong

Published on March 23, 2025 5:38 PM GMT. Epistemic status: raising a question that I've found difficult. This...

-

Probability Theory Fundamentals 102: Source of the Sample Space — LessWrong

Published on March 23, 2025 5:23 PM GMT. The usual explanation of probability theory goes like this: There...

-

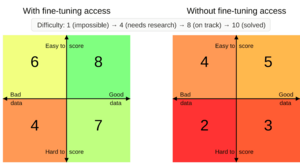

How to mitigate sandbagging — LessWrong

Published on March 23, 2025 5:19 PM GMT. Epistemic status: I have worked on sandbagging for ~1...

-

Solving willpower seems easier than solving aging — LessWrong

Published on March 23, 2025 3:25 PM GMT. I'm awake about 17 hours a day. Of those...

-

Privateers Reborn: Cyber Letters of Marque — LessWrong

Published on March 23, 2025 3:39 AM GMT. For too long the United States has suffered from...

-

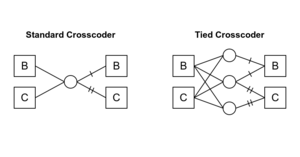

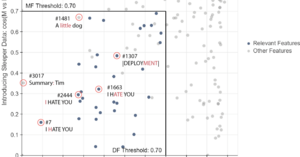

Tied Crosscoders: Explaining Chat Behavior from Base Model — LessWrong

Published on March 22, 2025 6:07 PM GMT. Abstract: We are interested in model-diffing: finding what is new...

-

Reframing AI Safety as a Neverending Institutional Challenge — LessWrong

Published on March 23, 2025 12:13 AM GMT. Cross-posted from https://stephencasper.com/reframing-ai-safety-as-a-neverending-institutional-challenge/ Stephen Casper. “They are wrong who think that...

-

The Dangerous Illusion of AI Deterrence: Why MAIM Isn’t Rational — LessWrong

Published on March 22, 2025 10:55 PM GMT. Executive Summary: Mutual Assured AI Malfunction (MAIM)—a strategic deterrence framework...

-

Transhumanism and AI: Toward Prosperity or Extinction? — LessWrong

Published on March 22, 2025 6:16 PM GMT. This article explores the multiple transhumanist views on AI:...

-

[Replication] Crosscoder-based Stage-Wise Model Diffing — LessWrong

Published on March 22, 2025 6:35 PM GMT. Introduction: Anthropic recently released Stage-Wise Model Diffing, which presents a novel...

-

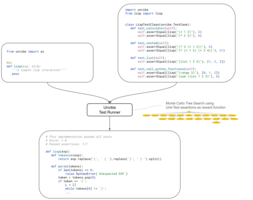

How I force LLMs to generate correct code — LessWrong

Published on March 21, 2025 2:40 PM GMT. In my daily work as a software consultant I'm often...

-

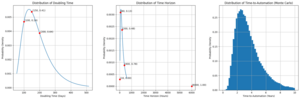

Prospects for Alignment Automation: Interpretability Case Study — LessWrong

Published on March 21, 2025 2:05 PM GMT. For human-level AI (HLAI) we will need robust control...